Executives want AI gains. They’ve read the headlines. They’ve seen the demos. They’ve watched competitors post wins. Now they want their teams shipping faster. That’s the right direction. But AI isn’t magic dust you can sprinkle on a tired codebase. It’s an investment in tooling.

I use AI coding tools every day. I’m building an AI agent orchestration platform. When AI works, it’s extraordinary. I’m not here to downplay that.

When people read “Spotify’s engineers don’t write code anymore,” they conclude the answer is to just use AI.

That conclusion is wrong. The data is starting to prove it. The reason has nothing to do with how good the models are. Most AI coding success comes from greenfield work. Most engineers don’t work in greenfield.

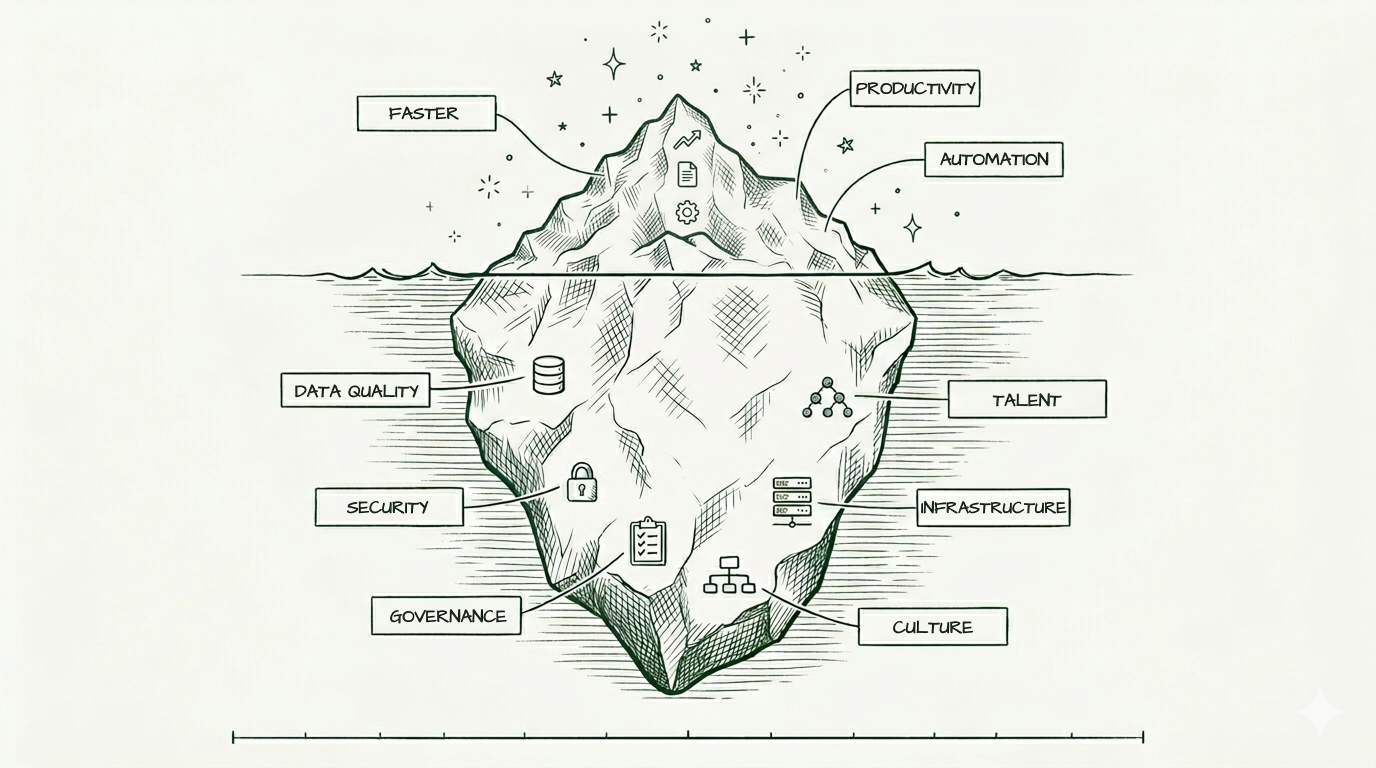

Greenfield versus the iceberg

AI works great in greenfield projects. Empty repository. Fresh context. No constraints. No history. The model has everything it needs because there’s nothing it doesn’t have. You ask for a Next.js dashboard with auth and a payment flow. It builds you one in an afternoon. The demos and viral wins come from this mode.

Now look at what most engineering work is.

A legacy codebase is like an iceberg. The code in front of you is the small part above the waterline. The bulk of what matters lives elsewhere. Why this service owns that table. Why we wrap that SDK instead of calling it directly. Which module looks harmless but holds up three downstream consumers. That knowledge sits in senior engineers’ heads. In three-year-old Slack threads. In post-mortems nobody re-read. In decisions that were obvious at the time and are now invisible. The model can read every file in the repo and still miss the one fact that matters.

AI works on icebergs too. It just needs much more upfront work to get there. The context the model gets for free in greenfield has to be built and maintained in legacy. That’s the part nobody talks about in the demos.

That’s why the same AI tool that builds a clean prototype can produce broken code in your production codebase. Same model. Same prompts. Different problem.

What changes when the codebase is real

Three things bite hardest in legacy work.

The AI doesn’t know what your senior engineers know. This is the iceberg problem made operational. Your senior engineers carry the why. What we tried before, what burned us, why this pattern instead of that one. The AI doesn’t have access to any of that. It defaults to the average of its training data, not your conventions. Without strong rules and examples to anchor it, AI produces code that looks plausible but doesn’t match what’s around it. House style drifts. New patterns sneak in alongside old ones. Multiply that across a hundred pull requests and your codebase ends up with two competing styles. Worse for the humans reviewing it, worse for the next round of AI working on top of it.

AI speeds up writing. Everything else stays the same speed. A clean diff in a fresh repository is easy to review. The same diff touching six legacy modules isn’t. Someone has to verify it doesn’t break invariants, regress performance, or trip a regulatory line. Writing was never the bottleneck in legacy work. Reviewing was. AI widens that gap. And the agent itself only gets better when it can run, test, and verify its own work. Which means it’s only as effective as your test infrastructure. If your tests are slow or unreliable, the AI can’t tell when it’s wrong, and a human has to babysit every change.

The cost of being wrong is asymmetric. A mistake in greenfield means a rewrite. A mistake in production legacy means an incident, a rollback, a regulatory finding, a board call. The risk-adjusted cost of an AI error in a mature system can be an order of magnitude higher than in a prototype. That’s why the governance overhead (specs, reviews, staged rollouts, audit trails) isn’t friction to remove. It’s friction that exists for reasons.

What Spotify and Stripe built

This is also why the Spotify and Stripe stories get misread.

Spotify didn’t adopt an off-the-shelf coding agent. They built their own. Their engineering team needed a tool they could plug into the rest of their infrastructure. Something that lets them swap models and wrap AI in their own quality controls. None of the tools you can buy could do that.

Think about what that means. One of the most engineering-mature companies in the world looked at the available AI coding tools and decided none of them worked. So they built their own — on top of years of platform investment they’d already made. The AI is the small new piece sitting on top of a much larger system they’ve been building for over a decade.

Stripe’s Minions tell the same story. 1,300+ autonomous pull requests a week sounds impressive. Then you see what sits underneath. Years of investment in developer tooling. Strict guardrails around what the AI can do. A hard rule: if the agent can’t fix a failing test in two attempts, a human takes over.
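The two-attempt rule is simple enough to sketch. What follows is not Stripe’s implementation, just a minimal illustration of the idea. The names `run_with_escalation`, `propose_fix`, `tests_pass`, and `Outcome` are hypothetical, and the agent and test runner are passed in as plain callables so the policy stays separate from any particular tool.

```python
from dataclasses import dataclass
from typing import Callable

MAX_ATTEMPTS = 2  # the two-attempt limit described above


@dataclass
class Outcome:
    status: str    # "ready_for_review" or "escalated_to_human"
    attempts: int  # how many fixes the agent proposed


def run_with_escalation(
    propose_fix: Callable[[int], str],   # hypothetical: agent returns a patch for attempt n
    tests_pass: Callable[[str], bool],   # hypothetical: runs the test suite against a patch
    max_attempts: int = MAX_ATTEMPTS,
) -> Outcome:
    """Let the agent try a bounded number of fixes, then hand off to a human."""
    for attempt in range(1, max_attempts + 1):
        patch = propose_fix(attempt)
        if tests_pass(patch):
            # Passing tests still means human review, not an automatic merge.
            return Outcome("ready_for_review", attempt)
    # Attempt budget exhausted: stop retrying and escalate.
    return Outcome("escalated_to_human", max_attempts)
```

The point of the sketch is the shape, not the details. The agent gets a fixed budget, the test suite is the judge, and the fallback is a person. None of that works unless `tests_pass` is fast and trustworthy, which is the platform investment doing the real work.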

What Spotify and Stripe did, in effect, is make their existing codebases behave more like greenfield from the AI’s view. Years of platform work made the institutional knowledge legible. The right way to build something became the obvious way. AI plugged into that and turned good practices into great ones.

They didn’t sprinkle AI on their problems. They didn’t even use the AI tools you can buy. They built their own, on top of years of platform work, because that’s what it takes to make AI work at scale.

The data is catching up

A 2026 Harness report surveyed 700 engineers and technical managers across five countries. The headline: 73% of engineering teams have no standardised templates or golden paths. Nearly three quarters of teams are trying to adopt AI on top of bespoke, inconsistent foundations.

Among developers using AI tools multiple times a day, 51% report more quality problems and 53% report more security incidents than before. Not fewer. More.

Sonar’s 2026 State of Code survey backs this from the developer side, with 1,100+ respondents. Adoption has gone mainstream. 72% of developers use AI tools daily. 42% of all committed code is now AI-generated or assisted, on track to hit 65% by 2027.

But look at the trust numbers. 96% of developers don’t fully trust AI-generated code. Only 48% always verify it before committing. We’re shipping huge volumes of code we don’t trust, through verification layers that weren’t built for this speed.

The effectiveness gap is just as telling. 90% of developers use AI for new code. Only 55% rate it effective for that. 72% use it for refactoring. Only 43% find it effective there. AI shines at documentation, test generation, and code review. It struggles with the architectural work where judgement matters most.

The bottleneck didn’t disappear when AI arrived. It moved.

The bottleneck moved — to the bookends

The story most people tell is that AI eliminates work. The reality is that AI moves it. To the two ends of the process most teams have underinvested in for years: specification and review.

Specification. The blank page problem used to be writing the first line of code. Now it’s writing a good enough spec for the AI to work from. An AI agent only produces good code if it understands what “good” means in your context. That needs clear requirements, architectural constraints, defined contracts between services, and someone who knows what the right answer looks like. Vague specs in, bad code out. Now it’s bad code at 10x the volume.

I’m seeing this in my own team. A stakeholder asks for “a dashboard.” The spec gets written. The team builds it. The stakeholder says “that’s not what I wanted.” The spec said absolute numbers. The stakeholder expected funnel metrics. Nobody caught the mismatch. AI or no AI, that’s a specification failure. With AI, the cost of building the wrong thing drops to hours instead of weeks. That makes the mismatch invisible until you’re five iterations deep.

Review. Someone has to verify what AI produced is correct, safe, and maintainable. Sonar’s data suggests most developers don’t do this consistently. 52% admit they don’t always verify AI code before committing. That’s not laziness. That’s a review process that was never designed for this volume.

Both bookends need investment. Better specification discipline upstream. Stronger review tooling and culture downstream. “Generate code faster” alone just means failing in both directions at higher speed.

What “foundation” actually means

If you’re going to make your codebase the kind of place AI can work, here’s what the work looks like.

Standardised environments. Every engineer should be able to spin up a working dev environment in minutes. Same config, same dependencies. If developer onboarding takes a week of “figure out why it doesn’t build on your machine,” AI agents will hit the same wall.

Golden paths. Templates for how new services and features get built. Not a wiki page nobody reads. Real scaffolding that makes the right way the easy way. Without them, AI produces a new snowflake every time.

Clear ownership. Every service has a specific owner. Not a team-of-everyone. AI can write the code, but someone has to make the architectural calls and live with the consequences.

Real test coverage. Tests that catch regressions, run fast, don’t lie. AI-generated code is only as safe as your ability to verify it. Brittle tests mean you trust blind or review every line by hand. Both are bad.

Working CI/CD. If your deployment pipeline is held together with bash scripts and hope, AI ships broken things faster than you can roll them back.

Tacit knowledge made legible. Architecture decisions in writing. Conventions in linters. House style in rules files. The institutional memory layer needs to become machine-readable, or the AI works blind.

Most engineering organisations have none of this. A few have some. Almost nobody has all of it.
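To make “conventions in linters” concrete, here is a minimal sketch of one tacit rule turned into a check: the “we wrap that SDK instead of calling it directly” kind of knowledge from earlier. Everything specific in it is an assumption for illustration. `vendor_sdk` is a made-up module name, `payments/sdk_wrapper.py` is a made-up path, and a real team would more likely express this in whatever linter they already run.

```python
import ast

# Hypothetical rule: only the wrapper module may import the vendor SDK directly.
BANNED_MODULE = "vendor_sdk"                 # made-up SDK name
ALLOWED_FILES = {"payments/sdk_wrapper.py"}  # made-up wrapper location


def find_direct_imports(source: str, filename: str) -> list[int]:
    """Return the line numbers where a file imports the SDK without going through the wrapper."""
    if filename in ALLOWED_FILES:
        return []
    violations = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom):
            names = [node.module or ""]  # module is None for "from . import x"
        else:
            continue
        # Match the SDK itself and any of its submodules.
        if any(n == BANNED_MODULE or n.startswith(BANNED_MODULE + ".") for n in names):
            violations.append(node.lineno)
    return sorted(violations)
```

Run in CI, a check like this tells a human or an agent about the convention at the moment they break it, instead of leaving it in someone’s head. The reason behind the rule still belongs in a written architecture decision that the check can point to.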

Where most teams actually are

I’ve been writing about AI coding as a spectrum of maturity. Four stages, from prompting to orchestrating.

Stage 1: prompting. Copilot-style autocomplete. Useful but it’s the floor.

Stage 2: assisting. Cursor, Claude Code. The AI is a pair programmer. Still engineer-led.

Stage 3: delegating. Engineers hand off bounded tasks to agents that run semi-autonomously, with review. This is where real productivity gains compound.

Stage 4: orchestrating. Engineers design systems of agents handling parallel work with structure and guardrails. This is Spotify. This is Stripe.

Most teams are between Stage 1 and Stage 2 and think they’re at Stage 3. The gap isn’t about how smart the AI is. It’s how much infrastructure you’ve built to work with it. You can’t delegate if your environments are flaky. You can’t orchestrate at scale without golden paths. Your foundation decides which stage you can reach.

In greenfield, you can skip straight to Stage 3 because there’s no foundation work to do. In legacy, the foundation work is the work.

Why leadership keeps getting this wrong

The pattern: a non-technical leader reads about Spotify on LinkedIn, sees a demo, feels competitor pressure, and tells engineering to “use AI more.”

This misses the point so badly it makes things worse.

AI is a force multiplier. It multiplies whatever you have, including your problems. Solid foundation, real leverage. Platform held together with tape and a team firefighting every week, you now get bad code at unprecedented speed. That’s not a win, even if velocity charts look great for a quarter.

There’s also a governance blind spot most leadership doesn’t see. Sonar found 35% of developers access AI coding tools through personal accounts rather than work-sanctioned ones. Roughly a third of developers are using AI outside whatever security, compliance, or quality guardrails the company thinks it has. “Just use AI” without sanctioned tooling is shadow IT in your codebase.

The companies winning with AI aren’t the ones who adopted fastest. They’re the ones who did the unglamorous platform work first. Backstage is ten years old. Fleet Management has been running for three. Stripe’s developer productivity infrastructure predates their AI initiative by years. Not lucky accidents. The point.

What this means if you’re leading

The real question isn’t “why aren’t we using more AI?” It’s “is our foundation ready for what AI will amplify?” If the honest answer is no, the fix isn’t more AI pressure on engineering. It’s investment in the boring infrastructure that makes AI possible.

That means funding platform teams who don’t ship user-facing features. Accepting that “standardise our dev environments” is a quarter of work without obvious output. Trusting your engineering leaders when they tell you the foundation needs attention before AI can help.

It also means investing in the bookends. The specification discipline that tells AI what to build. The review tooling that catches what it builds wrong. Sanctioned tools, clear policies, proper access. Not “figure it out.”

“Faster code generation” isn’t the goal. “Shipping better software faster, safely, at scale” is. AI is one tool for that. It’s not the tool.

Start now anyway

Here’s where executives are right. You can’t wait until your foundation is perfect before letting your team use AI. You won’t know where your foundation fails until you start putting AI through it. The mismatched dashboard spec, the AI agent that produced something off-style, the regression nobody caught — those aren’t reasons to slow down. They’re the diagnostic.

The teams that start now learn faster. They find the gaps in their tests, the missing rules files, the conventions that lived in someone’s head. They feel the review bottleneck and start investing in tooling for it. They notice when specs are too vague to produce good output, and they tighten up.

The teams that wait until they’re “ready” never get ready. The foundation work isn’t visible until AI puts pressure on it.

So: start using AI now. Pay attention to where it produces friction. Then invest in fixing those things — not a generic “platform modernisation” project, but the exact gaps your AI usage exposed. That’s the loop. AI as both the goal and the instrument that tells you what to build.

What you don’t do is mandate AI without giving the team time to do the foundation work. That’s the version that fails. The version that works is: use AI, learn from it, fix what it exposes, repeat.

Why I’m building an orchestration layer

This is why I’m building Pilot — an orchestration layer for AI coding agents.

Not because the world needs more AI hype. It doesn’t. Because the missing piece between “engineers using AI” and “organisations getting real leverage from AI” is structure. Guardrails. A way to enforce the engineering discipline that separates Spotify’s Honk from a team shipping bad code at unprecedented speed.

Companies with Backstage-scale internal investment will build their own. Everyone else needs something they can adopt. Something that gives them the guardrails without a decade of platform engineering first.

This is the gap Pilot is built for. Still early days, but if any of this resonates, join the waitlist and I'll keep you in the loop as it takes shape.

Join the waitlist →

Where are you on this?

If you’re trying to make AI coding work for your team and hitting the same walls — I’d love to hear your story. I’m collecting real cases from people navigating this, and happy to help think it through.

Drop me a note.

Sources

- Harness, State of DevOps Modernization 2026 — press release

- Sonar, State of Code Developer Survey 2026 — report

- Spotify Engineering, Honk series — Part 1

- TechCrunch on Spotify’s AI coding announcement — article

- Stripe Engineering, Minions — blog post

This essay sits within a broader thesis on AI coding. See the full argument →