Agents only get useful when they can verify their own work. That’s the bit nobody can skip. Without it, the speed gains evaporate the moment the agent makes a confident mistake, which it will, because the human then has to read every line to catch it. With verification, the agent corrects itself before the human sees the diff.

Peter Steinberger calls this closing the loop. His work on OpenClaw is a clear demonstration of what it looks like in practice. I’ve been working on the same problem from a different angle, building Pilot. The principle is universal. The shape of the loop isn’t.

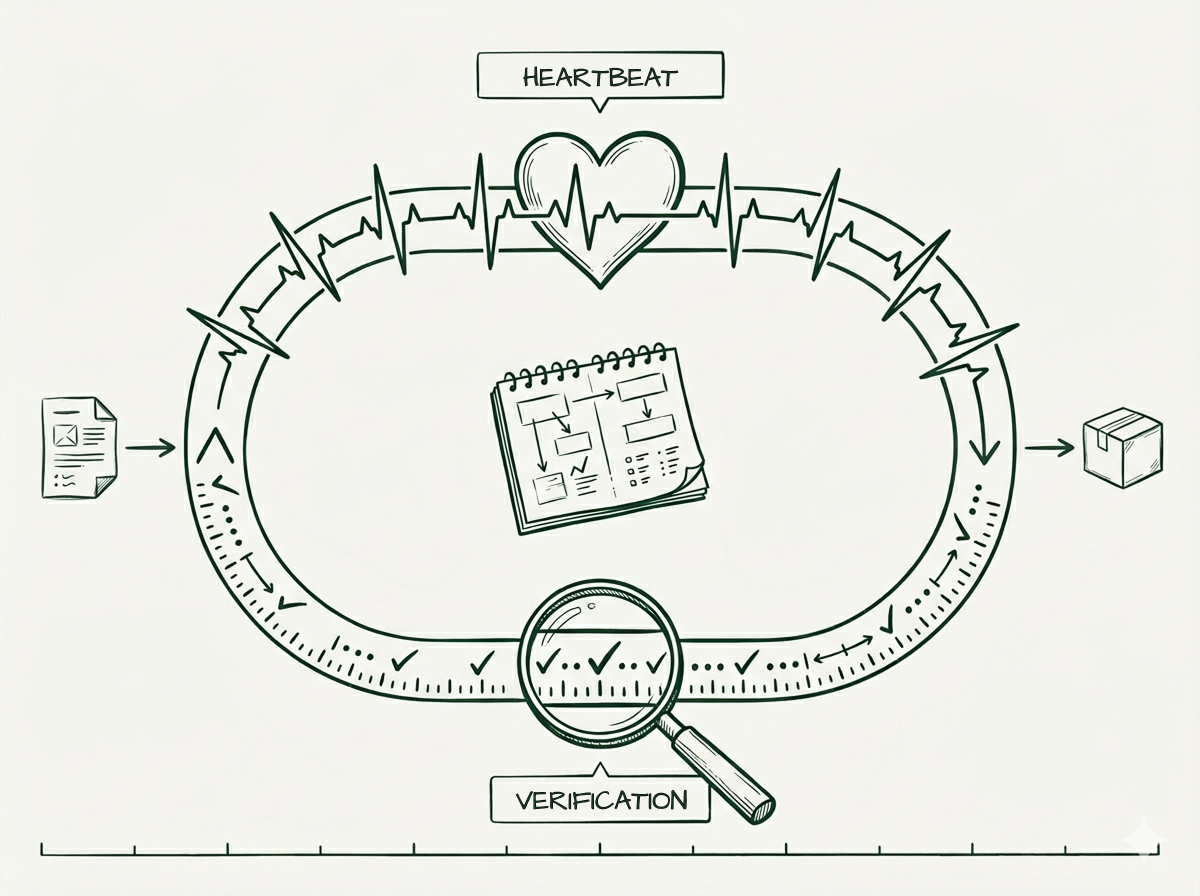

The loop has two halves

The loop is two things, not one. They’re easy to conflate and worth separating.

Verification is what gets checked. Tests run. Linters pass. The compiler is happy. The output matches the spec. Steinberger has invested a lot here. He picks CLI-first services (vercel, psql, gh, axiom) because agents can drive them and read their output. He builds custom CLIs when one doesn’t exist. The verification surface is rich, fast, and machine-readable. That’s the work.
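As a rough illustration of a machine-readable verification surface, here is a minimal sketch of a check runner. It is not Steinberger's tooling. The `CheckResult` type, the `verify` function, and the stand-in commands in `CHECKS` are all hypothetical, and a real project would list its own test, lint, and build commands instead.

```python
import subprocess
import sys
from dataclasses import dataclass


@dataclass
class CheckResult:
    name: str
    passed: bool
    output: str


def run_check(name: str, command: list[str]) -> CheckResult:
    """Run one command and capture its exit status and output for the agent to read."""
    proc = subprocess.run(command, capture_output=True, text=True)
    return CheckResult(name, proc.returncode == 0, (proc.stdout + proc.stderr).strip())


def verify(checks: dict[str, list[str]]) -> list[CheckResult]:
    """Run every check so the agent sees all results, not just the first failure."""
    return [run_check(name, command) for name, command in checks.items()]


# Hypothetical stand-in commands so the sketch runs anywhere.
CHECKS = {
    "tests": [sys.executable, "-c", "assert 1 + 1 == 2"],
    "lint": [sys.executable, "-c", "import sys; print('unused import'); sys.exit(1)"],
}

results = verify(CHECKS)
failures = [r for r in results if not r.passed]
for failure in failures:
    # This is the text an agent would read before attempting a fix.
    print(f"{failure.name} failed: {failure.output}")
```

The point of the shape is that every check reduces to a name, a pass/fail flag, and text the agent can act on without a human translating it.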

Heartbeat is what advances state. What triggers the agent to act. What decides what happens next. For a solo developer, this is the developer — they prompt, the agent runs, they review, they prompt again. When it’s automated, it’s time-based or run-based. Simple alarms. Generic.

As Steinberger shapes them, both halves are right for solo work on personal projects: tight verification, lightweight heartbeat, no compliance surface, no team coordination, no audit requirement.

The shape of the loop depends on what you’re building

This is where it gets interesting.

If you’re shipping a Twitter analytics tool by yourself, the loop is what Steinberger describes. Tests, lint, compile, eyeball the diff, ship. Anything more is overhead.

Take that same setup and point it at a customer-facing product with a team of eight, and the loop expands. Verification grows to include review — not as advisory, as a gate. The heartbeat gets shape: pipelines, environments, deployment windows. Multiple people need to know what’s happening and when.

Point it at software that touches patient data or financial transactions (DORA, GDPR, MDR, HIPAA, NIS2), and the loop expands again. Verification picks up sandbox isolation, governance review, audit trail capture, signed approvals at specific gates. The heartbeat stops being a clock. It becomes state-aware:

Wake when the spec is approved AND the test suite is green AND a human reviewer has signed off AND the audit log is written

That’s not a cron job. That’s a pipeline.
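The wake condition above can be written down directly as a predicate over pipeline state. This is only a sketch under assumptions: the `PipelineState` record and its field names are hypothetical, chosen to mirror the four conditions in the sentence, and they do not describe any particular tool.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class PipelineState:
    # Every flag defaults to False, so an unknown state never wakes the agent.
    spec_approved: bool = False
    tests_green: bool = False
    reviewer_signed_off: bool = False
    audit_log_written: bool = False


def should_wake(state: PipelineState) -> bool:
    """Wake only when every condition holds (logical AND across all flags)."""
    return all(asdict(state).values())


def unmet(state: PipelineState) -> list[str]:
    """Name the conditions still blocking the wake-up, in declaration order."""
    return [name for name, value in asdict(state).items() if not value]


partial = PipelineState(spec_approved=True, tests_green=True)
print(should_wake(partial))  # False
print(unmet(partial))        # ['reviewer_signed_off', 'audit_log_written']
```

A clock can only say that time has passed. A predicate like this can say which sign-off is still missing, which is what the rest of the team needs to know.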

The principle Steinberger names — close the loop — doesn’t change. The shape of what gets closed does. And the shape isn’t a philosophical choice. It’s a function of what the system touches and who has to answer when something goes wrong.

Generic heartbeat, structured heartbeat

Here’s the part I think doesn’t get said enough.

A generic heartbeat (time-based, run-based, prompt-driven) makes a runner. It executes things. It’s flexible. It’s fast. That’s what you want for exploratory work.

A structured heartbeat (state-aware, pipeline-driven, fail-closed) makes a workflow. It executes things in a specific order, with specific preconditions, and refuses to advance when those preconditions aren’t met.
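To make "fail-closed" concrete, here is a minimal sketch of a workflow whose stages run in order and stop at the first unmet precondition. The `Stage`, `GateClosed`, and `run_workflow` names and the example stages are hypothetical, and the sketch assumes that a precondition which raises or returns anything other than `True` counts as not met.

```python
from dataclasses import dataclass
from typing import Callable


class GateClosed(Exception):
    """Raised when a stage's precondition is not met."""


@dataclass
class Stage:
    name: str
    precondition: Callable[[dict], bool]
    action: Callable[[dict], None]


def run_workflow(stages: list[Stage], state: dict) -> list[str]:
    """Run stages in order; refuse to advance past an unmet precondition."""
    completed = []
    for stage in stages:
        try:
            allowed = stage.precondition(state) is True
        except Exception:
            allowed = False  # fail closed: an error is treated as "not met"
        if not allowed:
            raise GateClosed(f"{stage.name}: precondition not met")
        stage.action(state)
        completed.append(stage.name)
    return completed


stages = [
    Stage("implement", lambda s: s["spec_approved"], lambda s: s.update(code_written=True)),
    Stage("deploy", lambda s: s["audit_log_written"], lambda s: s.update(deployed=True)),
]

state = {"spec_approved": True}  # no audit log entry recorded yet
blocked = None
try:
    run_workflow(stages, state)
except GateClosed as exc:
    blocked = str(exc)

print(blocked)  # deploy: precondition not met
```

The missing `audit_log_written` key raises inside the precondition, and the workflow treats that as a closed gate rather than an open one. A runner would have carried on.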

Most coding harnesses today (Claude Code, Codex, Cursor, Aider) are runners. That’s not a criticism. It’s a design choice that fits the work most of their users are doing. You can bolt structure on top with hooks, custom slash commands, CLAUDE.md conventions, and custom CLIs, but that structure is advisory. Nothing fails closed. The guardrails are walls you can paint on, not walls that hold weight.

For solo work, that’s the right default. The flexibility is the feature.

For team-scale work in regulated industries, the default inverts. You want the structure to be load-bearing. You want stages that fail closed. You want the heartbeat to refuse to advance when the spec isn’t approved or the audit log isn’t written. You want flexibility to be the exception you fight for, not the default you fight against.

What the next generation has to answer

The verification has to be real. The loop has to close. Teams not getting the results they want from AI coding agents are likely failing at this layer because they haven’t done the investment work. Steinberger has shown what doing it the right way looks like at one end of the spectrum.

The loop a solo developer needs to close looks very different from the one a regulated team needs. Same principle, different physics.

That’s the question the next generation of agent infrastructure has to answer. Not whether to close the loop. What the loop needs to include, given the work, the team, and the risk surface.

It’s the question Pilot is built around.

My next piece will look at what tools start to look like when the loop has to be built in: when the heartbeat is state-aware, the pipeline fails closed, and the flexibility lives at the spec layer instead of the execution layer. There’s a second direction worth taking, and almost nobody is building it.

That one’s filed in two weeks. If you want it in your inbox the day it goes live, subscribe below. Otherwise, see you on LinkedIn.
